old tools

(discontinued, but hopefully still working)

jREC is a JAVA+Prolog-based tool for reasoning upon the dynamics of an event-based system with respect to an Event Calculus specification. More specifically, jREC dynamically acquires the event occurrences characterizing a running execution of the system and monitors their effects by updating the evolution of the corresponding fluents (i.e. properties varying over time) according to an Event Calculus theory. jREC is based on a lightweight axiomatization of Chittaro and Montanari's Cached Event Calculus (see the paper Efficient Temporal Reasoning in the Cached Event Calculus), which captures a form of proactive reasoning where the current fluents' maximal validity intervals are cached and then updated/revised as new event occurrences get to be known. In this way, the reasoner avoids restarting from scratch every time a new event happens (which is the case of classical EC deductive reasoners), thus tracking the system's execution efficiently.

jREC combines two modules:

- jREC (core), the reasoner;

- jREC UI, a user interface that graphically depicts the evolution of fluents in response to events.

In addition, a

jREC tester is available as a runnable JAR.

Two other tools are based on jREC:

MOBUCON and

ComMon.

jREC is the core package containing the reasoner interface, a reasoner implementation consisting of a lightweight Prolog-based axiomatization of the Cached Event Calculus and TuProlog as the Prolog engine, and the JAVA models for storing execution traces and the evolution of fluents.

The Event Calculus ontology (i.e. the predicates supported by the calculus and that can be defined and/or used in the domain-dependent theory) of jRec contains the following predicates:

-

initially(F) - fluent F holds in the initial state;

-

initiates(E,F,T) - event E is able to make F true at time T;

-

terminates(E,F,T) - event E is able to make F false at time T;

-

happens(E,T) - event E occurs at time T (also used ground inside the description of an execution trace);

-

holds_at(F,T) - fluent F is true at time T (usable only in the body of a clause as a tester);

Furthermore, jREC adopts a specific binary term

status/2 to model multi-valued fluents, where

status(F,S) means that fluent

F has current value

S. A simple domain-dependent theory can be downloaded

here.

The reasoner interface RecEngine contains the following methods:

-

public boolean setModel(String model) throws Exception - sets a domain-dependent theory (embedded in a string).

-

public FluentsModel start() throws Exception - starts the reasoner; the returned fluents' model contains all the fluents that hold initially.

-

public FluentsModel evaluateTrace(RecTrace trace) throws Exception - evaluates a chunk of event occurrences (i.e. a partial or complete trace) extending/revising the previously computed result and returning the fresh one; if the trace is closed, then the reasoner clears the cached result. Note that event occurrences can be also delivered out of order.

-

public long getStats() - returns the ms needed by the reasoner to process the last chunk of events.

-

public boolean shutDown() throws Exception - shuts down the reasoner.

Download

The sources of jREC can be found

here. The tool depends on

TuProlog and on

Log4J. Recall that the the tool is a research prototype, is undocumented and will print a lot of nonsense debug messages.

A new, more mature version of the tool is likely to be implemented soon.

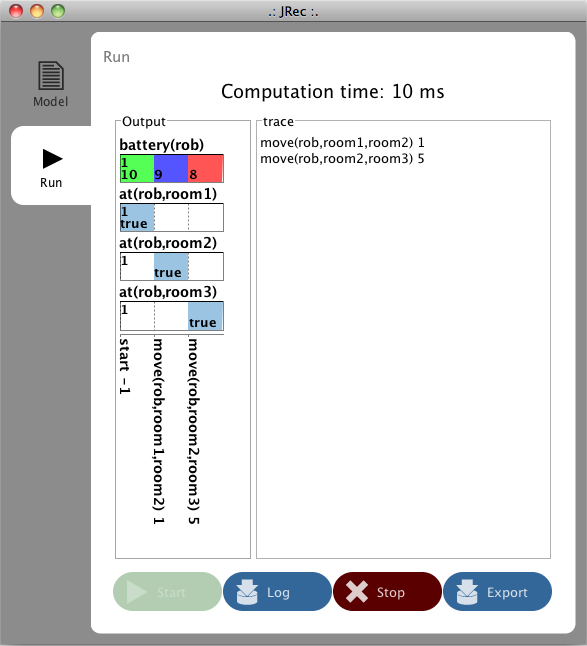

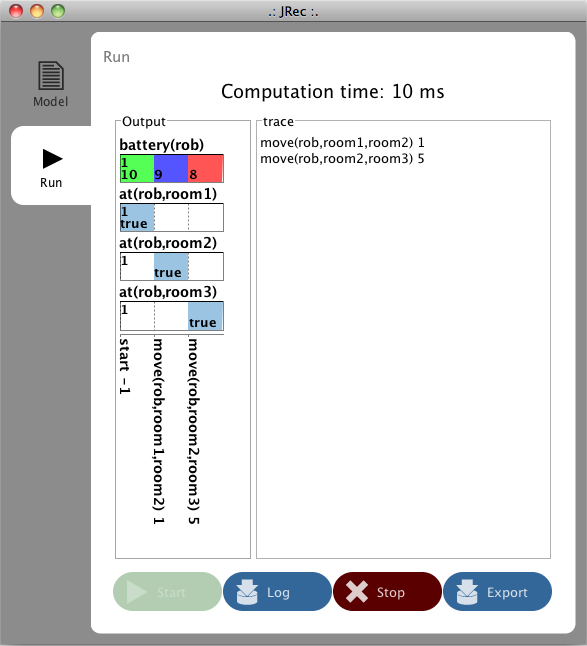

jREC UI is a user interface package for the visualization of a fluents' model. Each "normal" fluent is visualized in a row, showing the time intervals in which it holds; all the status fluents referring to the same fluent are packed in the same row, supposing that each fluent can be in a single status at a given time.

Download

The sources of jREC can be found

here. The package depends on

JFreeChart, on the ui package

SlickerBox developed by

Fluxicon, and on the two ui packages

UItopia and

UITopiaResouces (see

ProM 6). Recall that the package is still under development.

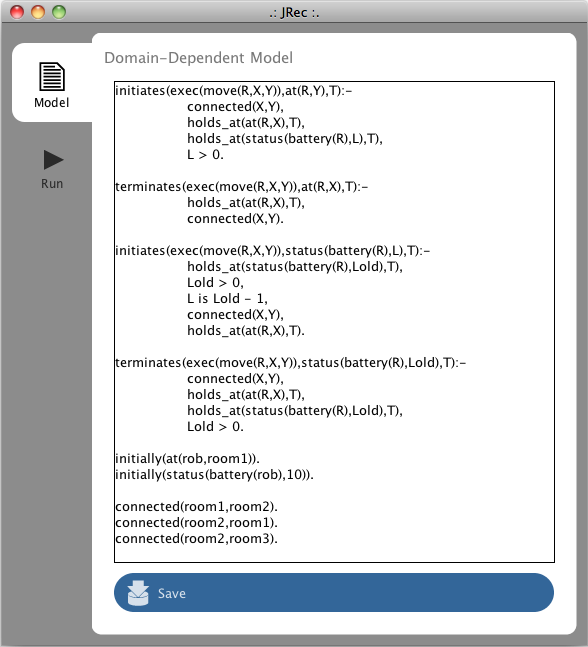

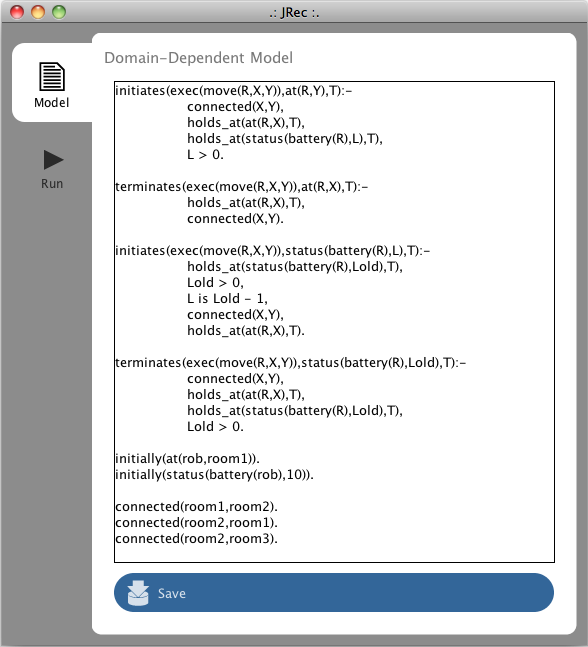

jREC Tester is a simple tester tool for jREC. It contains a runnable JAR which is composed of the tester, jREC UI and jREC core, together with the needed libraries. It also contains a resources subfolder with two property files for the coloring of normal and status fluents and with the file containing the domain-dependent theory. The screenshots above show the modeling and run panels of the tester. Note that:

-

The

save button will always work on that file (overwriting it). In order to preserve a certain domain theory, copy the model.txt file.

-

The domain-dependent theory must use term

exec(Event) for representing events, while event occurrences are simply given in the run panel as Event Time, one per row.

-

Each time the

log button is pressed, all the event occurrences contained in the panel are delivered to the reasoner.

Download

jREC Tester can be downloaded

here. Unzip the file and simply double click on the JAR, without changing the name of the subfolder and of the resource files contained inside it.

Acknowledgements

jREC has been developed by Marco Montali during his Post-Doc within the

AI group at DEIS, University of Bologna.

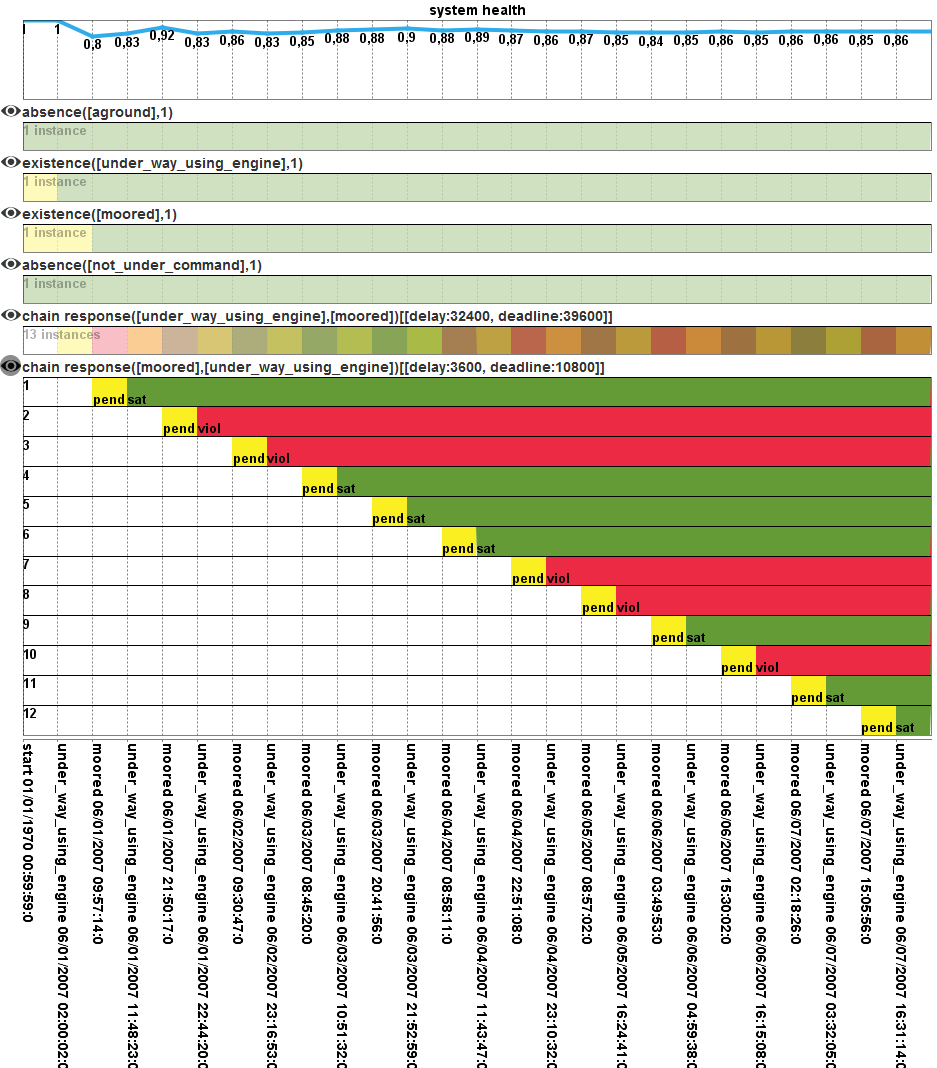

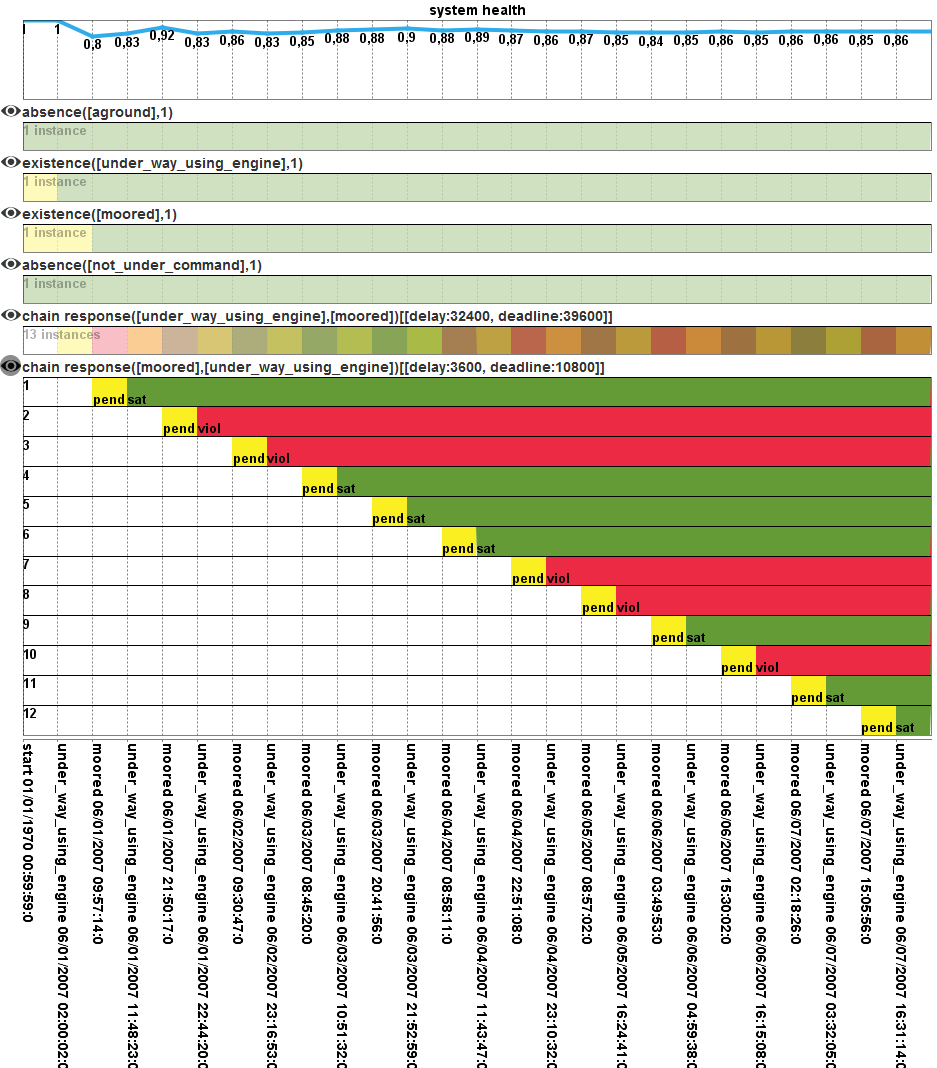

MOBUCON is a tool for the runtime monitoring of event-based systems with respect to business constraints. It tracks a running execution trace showing how it instantiates the business constraints of interests, reporting for each instance whether it is currently satisfied (i.e. the execution trace complies with it), pending (i.e. further events are needed to achieve compliance) or violated (i.e. the execution trace does not comply with it). More specifically, MOBUCON is composed of:

- an Event Calculus-based axiomatization of CLIMB constraints, i.e., ConDec constraints extended with metric temporal aspects, data and data-aware conditions;

-

jREC as the underlying dynamic reasoner;

-

an operational decision support provider for ProM 6 for wrapping the reasoning facility and offering it through the ProM operational support service, together with a plug-in for parsing ConDec models exported from the DECLARE system.

-

two test clients for delivering events to the MOBUCON operational support provider, one supporting the manual insertion of events, the other loading traces from a XES event log; the clients graphically report the evolution of the business constraint instances, together with a metric measuring the health of the system (i.e., "how much" it complies with the business constraints).

A description of the framework can be found in our

FASE 2012,

ACM TIST 2013, and

SAC 2013 papers.

Download

MOBUCON is currently under development inside

ProM 6. Two tester applications, based on

jREC Tester, are available. The first

tester supports the specification of ConDec constraints extended with metric temporal conditions. The application is organized in three panels:

-

meta model, containing the Event Calculus formalization of ConDec templates extended with metric temporal conditions;

-

model, containing a list of facts representing the specific ConDec model to be monitored;

-

run, the panel for delivering event occurrences and see the outcome.

See the sample content to have a grasp about the approach.

The second

tester is related to our

SAC 2013 paper, and extends the previous one with non-atomic activities and data-related conditions.

A new version of the tool is likely to come soon.

Acknowledgements

The implementation of MOBUCON has been started by Marco Montali in july 2011, when he was visiting researcher at the Eindhoven University of Technology. A special thank goes to

Wil van der Aalst and

Fabrizio Maggi, and to the Netherlands Organisation for Scientific Research (

NWO), that financially supported the visit.

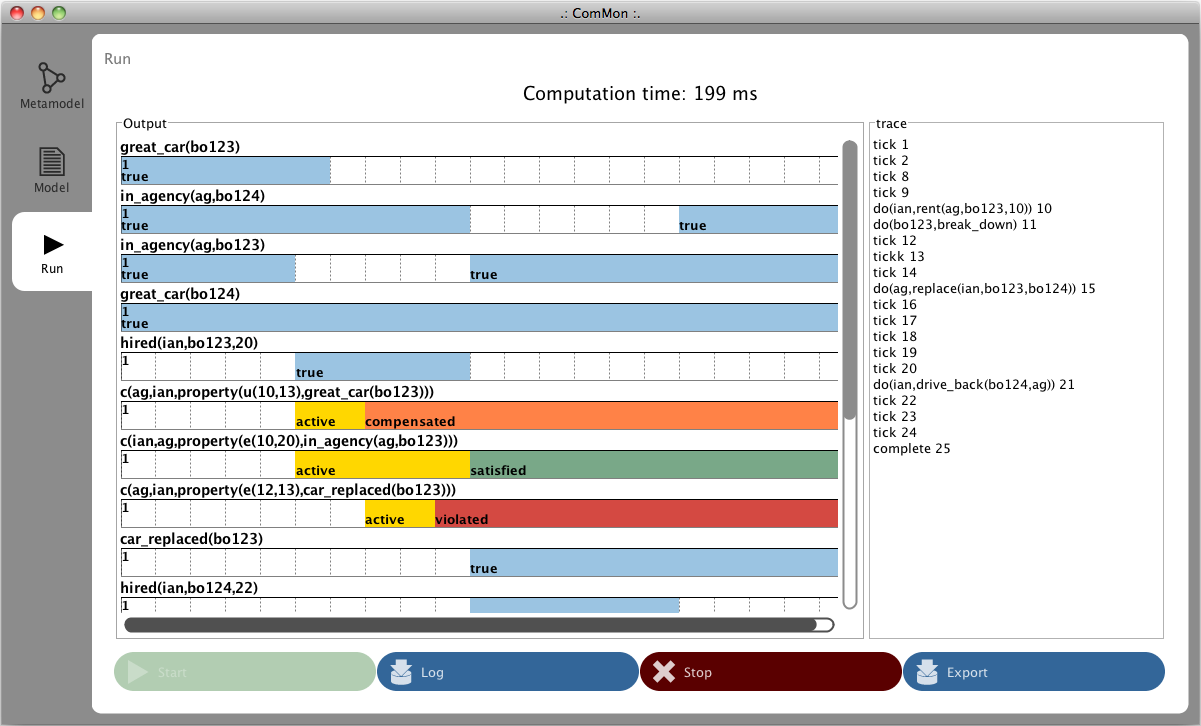

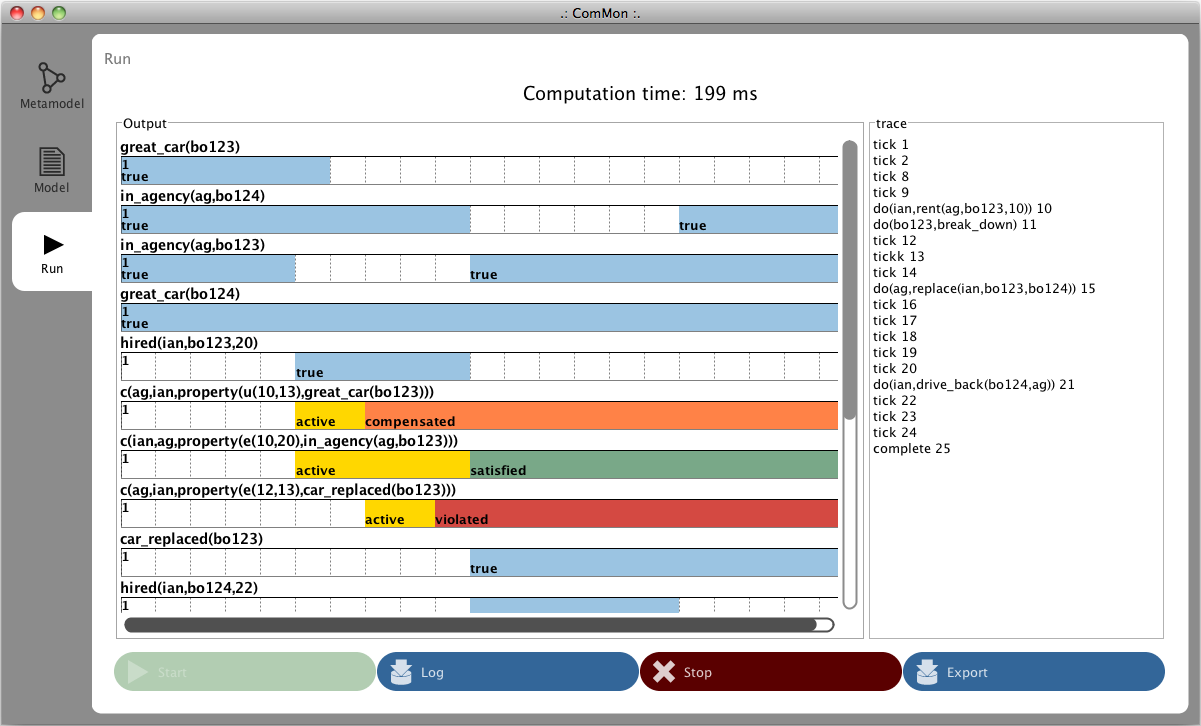

ComMon is a tool for the runtime monitoring of business interactions against social commitments that are mutually established by the interacting stakeholders. More specifically, ComMon is composed of:

- an Event Calculus-based axiomatization of the commitments' lifecycle and the operations by manipulating commitments; the axiomatization stems from the seminal paper of Pinar and Singh (Flexible Protocol Specification and Execution: Applying Event Calculus Planning using Commitments), and extends it by supporting also compensating commitments and (existential and universal) timed commitments, i.e., commitments related to a property that must be brought about inside some time interval, or uninterruptedly maintained true along some time interval;

-

jREC as the underlying reactive reasoner.

A description of the framework can be found in our

AT2AI 2010,

DALT 2011, and

JAAMAS papers.

Download

A tester application for ComMon, based on the jREC Tester, can be downloaded

here. The tester is organized in three panels:

-

meta model, containing the Event Calculus formalization of the lifecycle of timed commitments;

-

model, containing a list of facts representing the specific commitment model to be monitored;

-

run - the panel for delivering event occurrences and see the outcome.

See the sample content to get a glimpse of the approach (a file

trace.txt is provided to show a sample trace).

Acknowledgments

ComMon has been developed by Marco Montali during his PhD and Post-Doc, when he was part of the

AI group at DEIS, University of Bologna.

CLIMB (Computational Logic for the verIfication and Modeling of Business constraints) is a framework based on computational logic (extensions of logic programming in particular) for the declarative, constraint-based specification of event-based systems (such as business processes, service interaction, clinical guidelines, multi-agent interaction protocols), and for providing reasoning and verification support along their entire lifecycle.

CLIMB (Computational Logic for the verIfication and Modeling of Business constraints) is a framework based on computational logic (extensions of logic programming in particular) for the declarative, constraint-based specification of event-based systems (such as business processes, service interaction, clinical guidelines, multi-agent interaction protocols), and for providing reasoning and verification support along their entire lifecycle.

CLIMB is extensively described in my monograph

Specification and Verification of Declarative Open Interaction Models: a Logic-Based Approach.

The framework is constituted by:

- ConDec, a graphical constraint-based language for the declarative and open specification of business constraints. The language has been proposed by Maja Pesic and Wil van der Aalst, and is the core of the DECLARE system.

- SCIFF (based on abductive logic programming) and the Event Calculus as the underlying logic-based formal counterpart. All the ConDec constraints can be automatically translated onto a SCIFF or EC specification.

The following reasoners and tools can be used to provide support along the entire lifecycle of a ConDec specification, or of a third-party interaction model:

- Properties verification, a-priori compliance verification and composition are tackled by the g-SCIFF proof procedure, the generative variant of the SCIFF proof procedure (see Ch. 8, 9, 10 and 11 of the book). A special SCIFF template project (see the SCIFF manual), dealing with the specification and verification of CLIMB constraints, can be downloaded here. The project provides a facility for automatically producing, starting from a simple description of a CLIMB model (see the sample file

model.pl), the corresponding SCIFF representation; it is executed when the project is loaded. Furthermore, it provides a number of utilities for properties verification, such as conflicts detection and discovery of dead activities (see climbUtils.pl).

-

On-the-fly compliance verification is tackled by the SCIFF proof procedure, interleaving it with g-SCIFF in case of speculative runtime verification (see Ch. 13 of the book).

-

Monitoring is tackled by means of the Event Calculus and corresponding runtime reasoners (see Ch. 14 of the book). The currently supported runtime reasoner is jREC, and the monitoring tool for extended ConDec models is MOBUCON. Alternatively, a reactive EC reasoner again based on SCIFF is described in Ch. 14 of the book and in the paper A Logic-Based, Reactive Calculus of Events.

-

Enactment is managed by interleaving monitoring and speculative runtime verification (see Ch. 14 of the book).

-

Declarative process mining (model discovery and trace analysis) are respectively handled by SCIFF or Prolog and by the Inductive Logic Programming algorithm described in the paper Exploiting Inductive Logic Programming Techniques for Declarative Process Mining. Two ProM plug-ins have been developed for dealing with these tasks: SCIFF Checker for conformance checking and DecMiner for model discovery.

Acknowledgments

The CLIMB framework has been investigated by Marco Montali during his PhD and Post-Doc, when he was part of the

AI group at DEIS, University of Bologna. The SCIFF framework has been developed by the AI Groups at DEIS, University of Bologna, and ENDIF, University of Ferrara. DecMiner has been developed by

Evelina Lamma,

Fabrizio Riguzzi, and Sergio Storari (AI Group at ENDIF, University of Ferrara). Part of this investigation has been carried out visiting the

AIS group at the Eindhoven University of Technology, and in particular collaborating with Prof.

Wil van der Aalst, Maja Pesic and

Fabrizio Maggi.

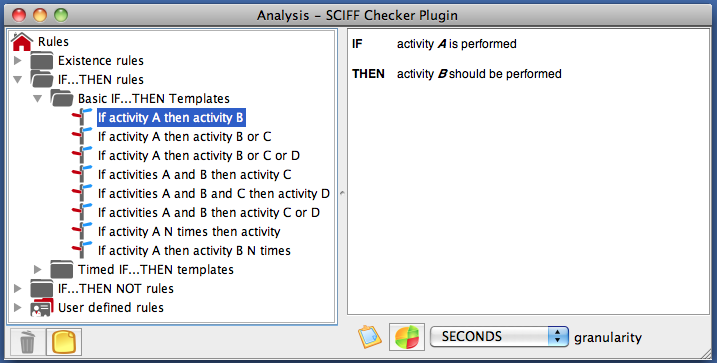

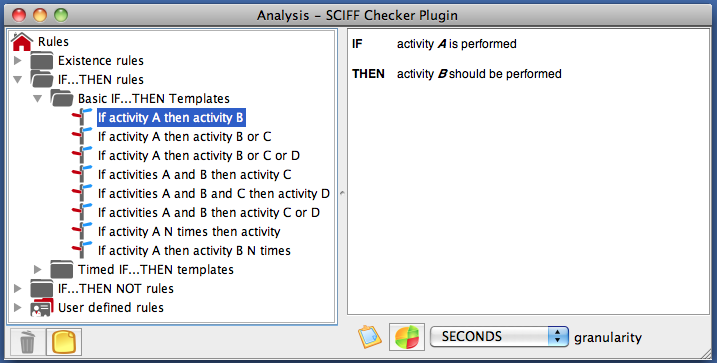

SCIFF Checker is a process mining tool for conformance checking and ex-post analysis of event logs. It is implemented in JAVA+Prolog as a plug-in of ProM. The plug-in classifies the execution traces contained inside an event log as compliant or noncompliant with respect to a business rule. Rules are expressed in a pseudo-natural language notation and are inspired by the patterns of the ConDec language extended with metric time constraints and originators.

|

Step 1

|

The user selects an abstract business rule from a set of templates. Rules contain event patterns and expectations, which are in turn associated to an activity name, an event type (e.g. start, complete, abort of an activity), an originator and an execution time.

The user selects an abstract business rule from a set of templates. Rules contain event patterns and expectations, which are in turn associated to an activity name, an event type (e.g. start, complete, abort of an activity), an originator and an execution time.

|

|

Step 2

|

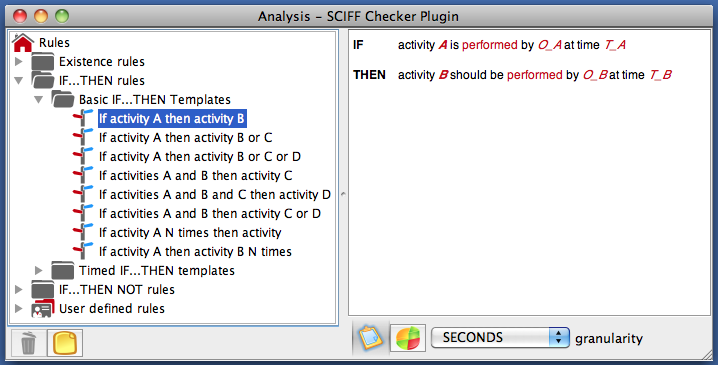

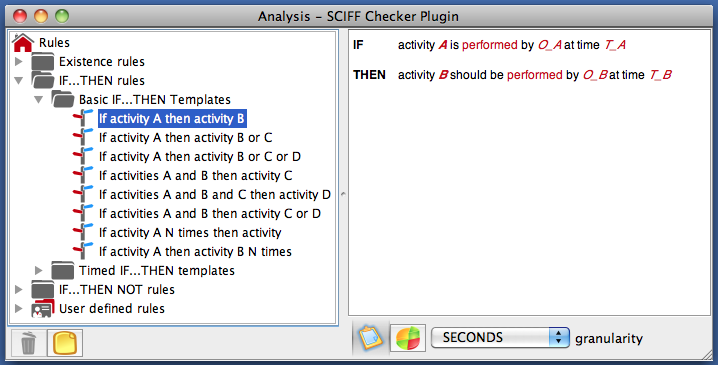

By clicking on the "customize" button, all the editable parts of the rule becomes visible and active. An element can be edited by fixing its value and/or adding specific constraints. Customized rules can be saved for future re-use.

By clicking on the "customize" button, all the editable parts of the rule becomes visible and active. An element can be edited by fixing its value and/or adding specific constraints. Customized rules can be saved for future re-use.

|

|

Step 3

|

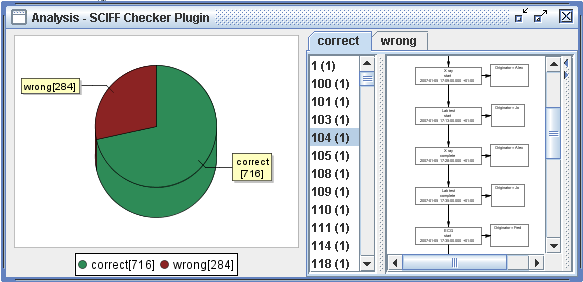

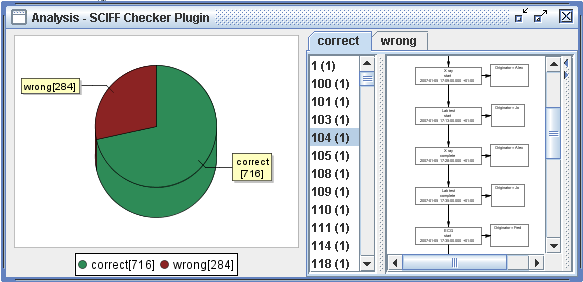

Finally, the user can fix the time granularity used for the analysis and launch the analysis task. Such a task is carried out in Prolog, using the approach described in the paper Checking Compliance of Execution Traces to Business Rules. The final result is a partition of the input log into a compliant and noncompliant set. A new analysis phase can be then carried out by considering the whole log or only one of the two sets. Such sets can also separately feed other plug-ins.

Finally, the user can fix the time granularity used for the analysis and launch the analysis task. Such a task is carried out in Prolog, using the approach described in the paper Checking Compliance of Execution Traces to Business Rules. The final result is a partition of the input log into a compliant and noncompliant set. A new analysis phase can be then carried out by considering the whole log or only one of the two sets. Such sets can also separately feed other plug-ins.

|

Download

The tool is currently under porting on

ProM 6.

The version for

ProM 5 can be downloaded

here. The zip file contains two subfolders, which mirror the ones contained in the ProM distribution:

-

plugins contains the jar of the plug-in (SCIFFChecker.jar) and a subfolder (SCIFFChecker) which includes two xml files that store the abstract rule templates and the user-defined rules. Both the jar and the subfolder must be copied into the <Prom Distribution>/lib/plugins/ folder of ProM.

-

external contains the external libraries required by SCIFF Checker, i.e. TuProlog and JFreeChart, which in turn requires JCommon. All these three jar files must be copied into the <Prom Distribution>/lib/external/ folder of ProM.

The last step to make SCIFF Checker work is to modify the property file

<ProM Distribution>/analyses.ini, adding a new line

<NEW KEY>=org.processmining.analysis.sciffchecker.SCIFFChecker

where

<NEW KEY> is a unique alpha-numeric key. SCIFF Checker will consequently appear inside the

analysis menu of ProM.

Acknowledgements

SCIFF Checker has been developed by Marco Montali in the context of a research contract within the

AI group at DEIS, University of Bologna.

The Prolog-based analysis task is a joint work with

Federico Chesani and Paola Mello (AI group at DEIS, University of Bologna) and

Fabrizio Riguzzi (AI group at ENDIF, University of Ferrara).